Stat Fails On The News

Journalists are not perfect, especially at maths. Sit back, relax, and take a look at the most common statistical failures in the media.

In the modern world, everyone is inundated with statistics. Numbers and figures pop up everywhere, from newspapers to the workplace, not to mention the countless charts presented every day.

Unfortunately, most people don’t fully understand the concepts behind statistics. Do you know when to use the mode instead of the mean? What does the standard deviation have to do with the median? Can you explain why a bar chart is sometimes better than a pie chart? Unless you are a mathematics professor or have cheated by googling the answers, chances are you don’t know.

This is a shame, because statistics has become an integral part of society and is increasingly present in media and politics. Bad-faith actors and politicians can manipulate statistics to their advantage, and journalists can make silly mistakes that mislead readers and viewers through misrepresentation.

But it is not all bad news. Inside the data journalism departments of newsrooms, editors and reporters stay alert to seven kinds of mistakes, deliberate or accidental, that could affect their reporting and presentation. Armed with the right knowledge, anyone can cut through the noise and make judgments based on mathematical truths rather than the political spin in the news. These are the seven sins of data journalism you should look out for.

Wild Guessing

Wild guessing is an often unspoken problem behind many statistics reported in the media. For social problems such as crime or drug abuse, good statistics are usually scarce because much of the activity is hidden or goes unreported. Data journalists call this unrecorded portion “the dark figure.”

Journalists tend to get around “the dark figure” by supplying a number anyway. Sometimes that number is an educated guess by experts in the field; in other cases, it is a wild guess built on unsound assumptions. Worse still, the numbers are usually underestimated or exaggerated depending on who provides them. For example, politicians can exaggerate or play down the crime rate to fit their political agenda, the police can exaggerate it to persuade politicians to give their organization more funding, and the media can use inflated crime figures to write hysterical headlines that crumble under the slightest scrutiny.

Ill-Defined Problems

Statistics often involve ill-defined problems, where the definitions are deliberately vague: a broad definition covers more events and produces a larger estimate of a problem’s size. However, this tactic can distort understanding of the real problem and leave the data open to interpretation rather than presenting current events accurately.

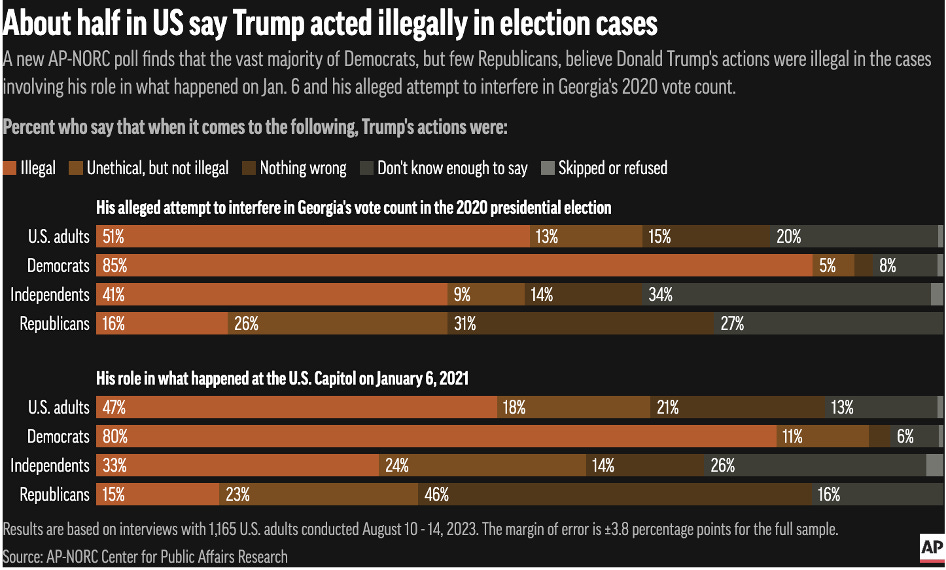

For example, back in August 2023, the Associated Press website published an article under the headline “Americans are divided along party lines over Trump’s actions in election cases, AP-NORC poll shows.” Judging by the outlet’s own graph, shown above, the division the AP alludes to is murky.

If you read the headline’s phrase “Trump’s actions in election cases” as a question of legality, you can argue, as the headline does, that the country is split down the middle over whether Trump’s actions in Georgia and concerning the Capitol riots were illegal. However, if you read it as a question of whether people believe what Trump did was wrong, the data tells an entirely different story.

Combining “illegal” and “unethical but not illegal,” 64% believe Trump’s actions in Georgia were wrong to some degree, and 65% believe the same about his actions concerning the Capitol riots. Compared with the share who believe Trump did nothing wrong, that is a wide margin of 44 percentage points in both cases in favor of the view that Trump acted unethically.

Invalid Measurements

You are probably familiar with the phrase “research shows that…” from news reports. However, the measurement decisions behind such research are usually hidden from the audience, either going unmentioned or buried in a hyperlink inside the article. Measurement means deciding how to count, and it is in this counting process that invalid results can creep in.

Data journalism experts generally trace invalid measurements to survey research and the questions pollsters ask. On many social issues, public attitudes are reduced to simple Yes or No answers. However, public attitudes can be more nuanced than a two-way choice. On abortion, for example, someone who would never undergo the procedure themselves for religious or ethical reasons may still support abortion as a universal right. Unfortunately, the nuanced attitudes many people hold on such issues are not captured by these polling questions, which creates a narrative of opposing sides rather than compromise in the middle.

Furthermore, the wording of a question matters. To take an example from Joel Best’s book Damned Lies and Statistics, supporters of gun control might ask, “Do you favor cracking down against illegal gun sales?” Opponents of gun-control measures, on the other hand, might ask, “Would you favor or oppose a law giving police the power to decide whether you may or may not own a firearm?” Both questions use deliberately leading language, tying gun control to another issue that resonates with respondents, which can produce a more biased answer.

Poor Sampling

Sampling matters in data journalism, especially in news reports built on polling data. Sample size is important: generally, the more people surveyed, the better. However, journalists also need to keep in mind whether the sample is representative or biased.
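To see why “the more, the better” comes with diminishing returns, here is a minimal Python sketch of the textbook margin of error for a proportion. It assumes a simple random sample, a 95% confidence level (z = 1.96) and the worst case p = 0.5. The function name `margin_of_error` is illustrative and does not come from any polling library.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Approximate margin of error for a proportion from a simple random sample.

    Assumes random sampling; it says nothing about whether the sample is biased.
    """
    if n <= 0:
        raise ValueError("sample size must be positive")
    return z * math.sqrt(p * (1 - p) / n)


for n in (100, 400, 1_000, 4_000, 10_000):
    print(f"n={n:>6}: ±{margin_of_error(n) * 100:.1f} percentage points")
```

Quadrupling the sample only halves the margin of error. A formula like this also cannot detect a sample that is biased rather than merely small, which is why representativeness matters as much as size.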

For example, in US politics, pollsters often divide political affiliation into Republican, Democrat, or Independent. However, that label says little about how independents actually vote, and this uncertainty affects whether the data is representative. Some independents are liberal and consistently vote Democrat, while others are more conservative but vote Democrat for their own reasons. The same applies to independents who vote Republican: some back the GOP because they are conservative, while some center-left independents vote Republican because of particular policies.

The sampling method also matters. Convenience sampling uses whichever respondents are easiest for the interviewer to reach at a given time, for example surveying passers-by on a street in Causeway Bay about their shopping intentions. Random sampling requires more careful planning of how respondents are selected, while targeting specific populations can be crucial for collecting data about particular social groups. One big trap journalists want to avoid is overgeneralization, in which findings from a single group are treated as an invariable rule.
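The street-survey example can be simulated. The sketch below is a toy model with entirely hypothetical numbers, not real Causeway Bay data. In it, 20% of people are street shoppers who mostly plan to shop, and everyone else is less inclined. It compares a random sample with a convenience sample drawn only from people on the street.

```python
import random

# Hypothetical parameters, for illustration only.
POPULATION_SIZE = 100_000
STREET_SHARE = 0.2       # share of people who are out shopping on the street
PLAN_RATE_STREET = 0.8   # street shoppers who say they plan to shop
PLAN_RATE_OTHER = 0.3    # everyone else who says they plan to shop


def build_population(seed: int = 0) -> list[dict]:
    """Create a synthetic population where each person has a location flag and an answer."""
    rng = random.Random(seed)
    people = []
    for _ in range(POPULATION_SIZE):
        on_street = rng.random() < STREET_SHARE
        rate = PLAN_RATE_STREET if on_street else PLAN_RATE_OTHER
        people.append({"on_street": on_street, "plans_to_shop": rng.random() < rate})
    return people


def share_planning(sample: list[dict]) -> float:
    """Fraction of a sample that says it plans to go shopping."""
    return sum(person["plans_to_shop"] for person in sample) / len(sample)


population = build_population()
rng = random.Random(1)

# Random sampling: everyone in the population has the same chance of selection.
random_sample = rng.sample(population, 1_000)

# Convenience sampling: only the people the interviewer meets on the street.
convenience_sample = [p for p in population if p["on_street"]][:1_000]

true_share = share_planning(population)
random_estimate = share_planning(random_sample)
convenience_estimate = share_planning(convenience_sample)

print(f"true share:         {true_share:.1%}")
print(f"random sample:      {random_estimate:.1%}")
print(f"convenience sample: {convenience_estimate:.1%}")
```

In this model the convenience sample stays far from the true figure no matter how large it grows, because it only ever reaches one group. That is overgeneralization in miniature.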

Misleading Representation

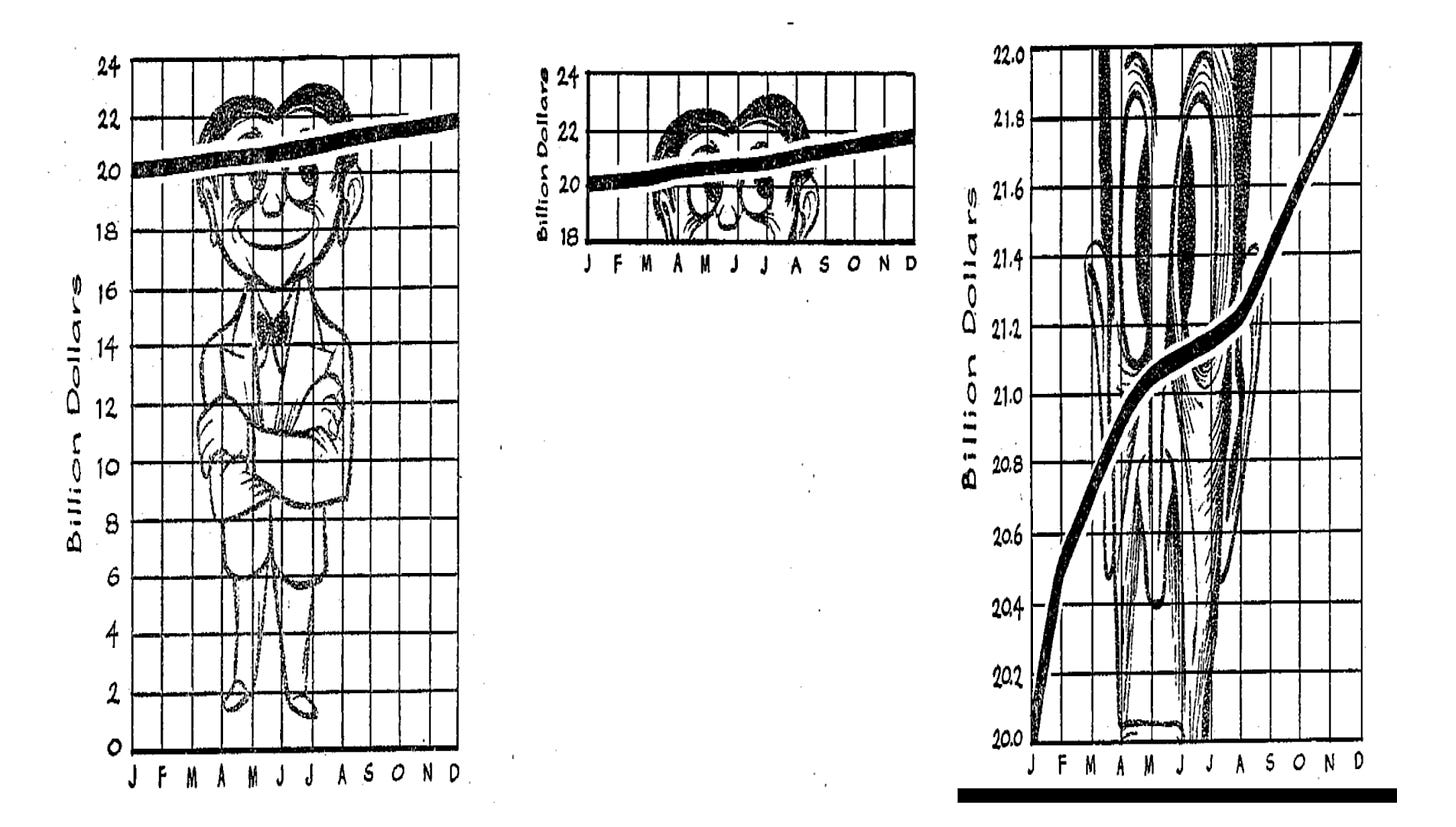

This is the most common sin of data journalism, appearing in news articles, TV reports, and other media alike. The graph shown below comes from Darrell Huff, author of How to Lie with Statistics, a book that catalogs the most common ways data in graphs can be misrepresented.

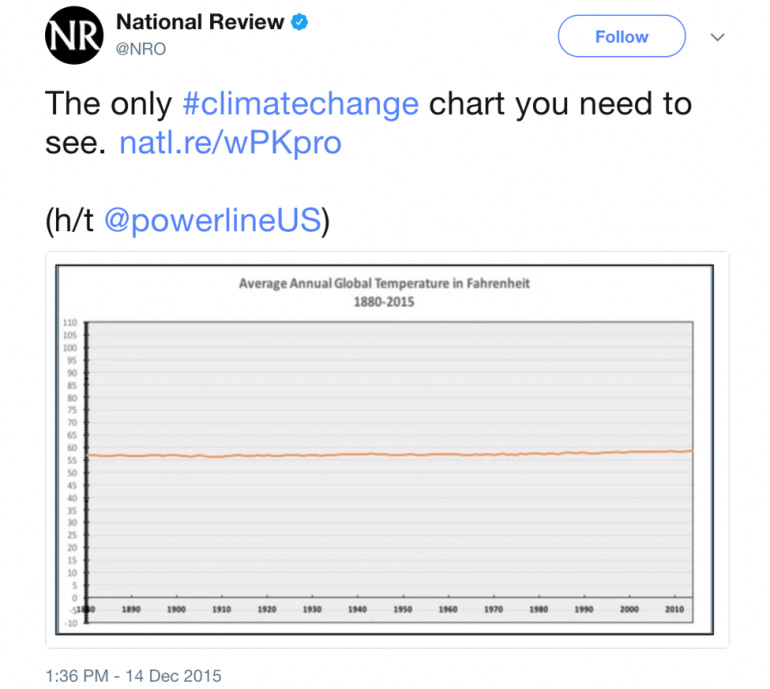

In some cases, data can be cherry-picked and presented to make a point. Take the infamous case of the National Review graph on climate change. For context, the National Review is a conservative magazine that has regularly criticized climate science and rejected the scientific consensus on the issue. Here is a similar chart:

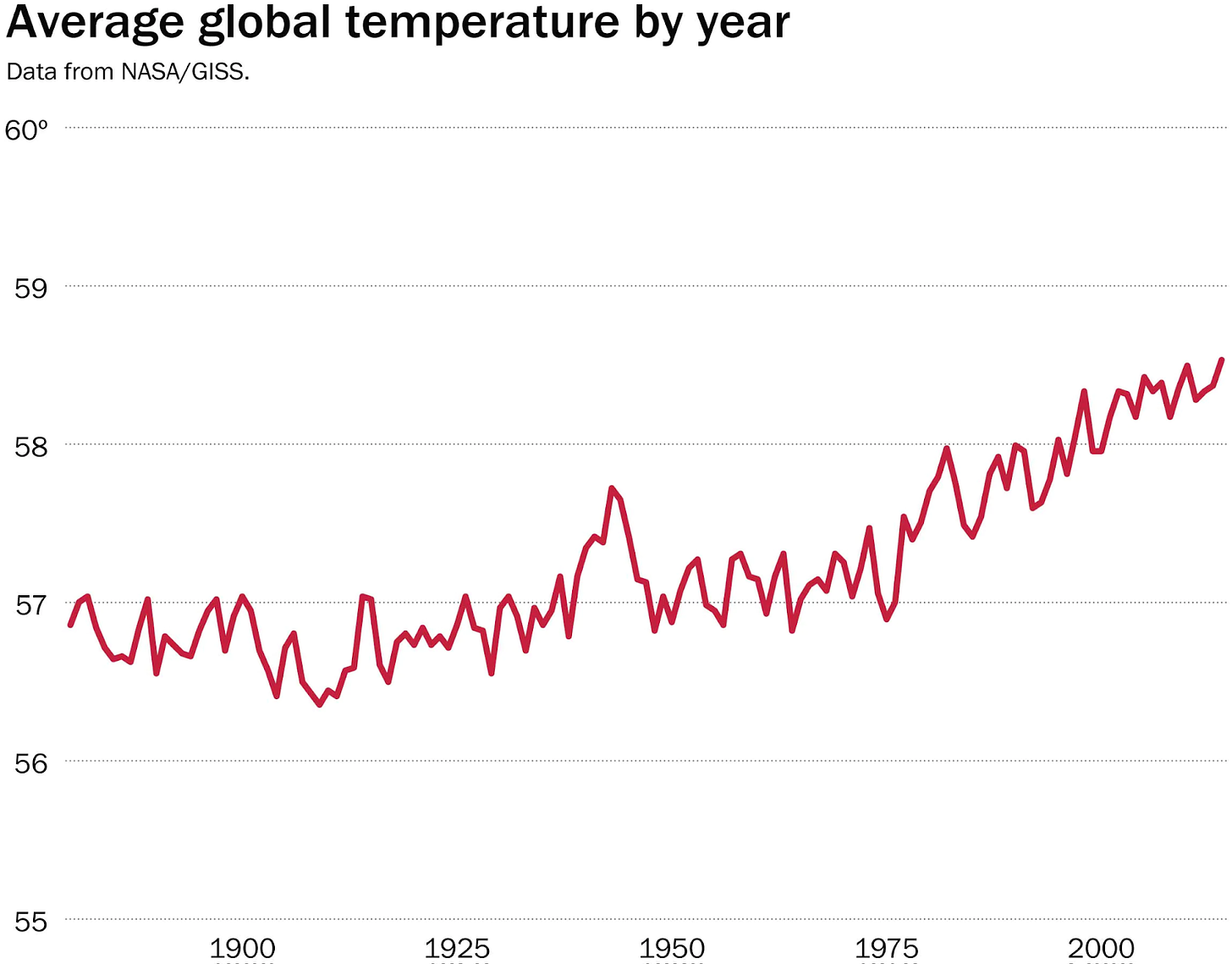

The data in the graph is correct, but the data journalist who worked on the story deliberately stretched the scale to undercut the notion that average global temperature is rising year by year. Look closely at the Y-axis: it runs from 0 to 110 degrees Fahrenheit, so a change of a degree or two barely registers. With this graph, the writers are engaging in pure obfuscation and misleading their readers. For reference, the Washington Post presented the same data in a less misleading manner.
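A little arithmetic shows how much the axis range controls the impression a chart gives. This sketch assumes a hypothetical 1.5 °F rise, not the actual figure in the chart. It compares how much of the chart’s height that rise fills on a 0 to 110 °F axis with how much it fills on a hypothetical axis fitted to the data. `visual_share` is an illustrative helper, not part of any charting library.

```python
def visual_share(change: float, axis_min: float, axis_max: float) -> float:
    """Fraction of a chart's vertical extent that a given change occupies."""
    span = axis_max - axis_min
    if span <= 0:
        raise ValueError("axis_max must exceed axis_min")
    return change / span


rise = 1.5                          # hypothetical temperature rise in °F
wide = visual_share(rise, 0, 110)   # the 0-110 °F axis described above
tight = visual_share(rise, 56, 59)  # hypothetical axis hugging the data

print(f"0-110 °F axis: the rise fills {wide:.1%} of the chart height")
print(f"56-59 °F axis: the rise fills {tight:.1%} of the chart height")
```

The same arithmetic cuts the other way. Squeeze the axis too tightly and a trivial change can look dramatic, so the question to ask is always what range the data actually spans.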

Bias, Flawed Research Design & Misinterpretation

This part combines the last two sins of data journalism into one. Misinterpretation is a stand-alone sin and, by its nature, a technical one. It concerns averages such as the mean, median, and mode; percentages presented in tables; percentage-point increases versus percent increases; how proportions and rates are represented; whether risks and odds are absolute or relative; how confidence intervals are reported; and whether an association is mistaken for causation.
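Three of these pitfalls are easy to demonstrate with Python’s standard `statistics` module and plain arithmetic. All figures below are hypothetical and chosen only to make the contrasts visible. A single outlier pulls the mean (70.25) far above the median (23.5). A rise from 20% to 25% is 5 percentage points but a 25% increase. A risk that “doubles” may mean just one extra case per 1,000 people.

```python
from statistics import mean, median, mode

# 1. Averages: hypothetical monthly incomes (thousands) with one very high earner.
incomes = [18, 20, 20, 22, 25, 27, 30, 400]
print("mean:  ", mean(incomes))    # dragged upward by the single outlier
print("median:", median(incomes))  # the middle of the sorted values
print("mode:  ", mode(incomes))    # the most common value


# 2. Percentage points versus percent change.
def percentage_point_change(old_pct: float, new_pct: float) -> float:
    """Simple difference between two percentages."""
    return new_pct - old_pct


def percent_change(old: float, new: float) -> float:
    """Change relative to the starting value, expressed as a percentage."""
    return (new - old) / old * 100


# Hypothetical: support for a policy rises from 20% to 25%.
print("percentage points:", percentage_point_change(20, 25))
print("percent change:   ", percent_change(20, 25))

# 3. Relative versus absolute risk (hypothetical cases per 1,000 people).
baseline_cases, exposed_cases = 1, 2
relative_risk = exposed_cases / baseline_cases         # the "risk doubles!" headline
extra_cases_per_1000 = exposed_cases - baseline_cases  # the absolute difference
print("relative risk:", relative_risk)
print("extra cases per 1,000:", extra_cases_per_1000)
```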

Various forms of bias can affect the work of data journalists. Non-response bias, or participation bias, arises when the people who respond differ systematically from those who do not, so that certain traits are over-represented among respondents and skew the outcome. Response bias covers the wide range of tendencies that lead respondents to answer questions falsely. The main factor is the “socially desirable response,” in which respondents answer the way their social circles would prefer rather than truthfully. Selection bias and publication bias can stem from the deliberate selection of particular data and participants, while a biased control group also skews the results. On top of that, a conflict of interest can worsen all of these biases, since the people collecting and processing the data may introduce them consciously or subconsciously.
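Non-response bias depends on who answers, not just how many people answer. The sketch below uses made-up response rates and a hypothetical helper, `respondent_share`. It computes the share of respondents holding a view when the people who hold it are more eager to reply than those who do not.

```python
def respondent_share(true_share: float, rate_with_trait: float, rate_without_trait: float) -> float:
    """Share of respondents (not of the population) who hold a trait.

    true_share: fraction of the population holding the trait.
    rate_with_trait / rate_without_trait: response rate in each group.
    """
    responders_with = true_share * rate_with_trait
    responders_without = (1 - true_share) * rate_without_trait
    total = responders_with + responders_without
    if total == 0:
        raise ValueError("nobody responded")
    return responders_with / total


# Hypothetical survey: 30% of the population holds a view.
unbiased = respondent_share(0.30, 0.40, 0.40)  # both groups respond at the same rate
biased = respondent_share(0.30, 0.60, 0.20)    # holders respond three times as often

print(f"equal response rates:   {unbiased:.1%}")
print(f"unequal response rates: {biased:.1%}")
```

With equal response rates the survey recovers the true 30%. When holders of the view respond three times as often, about 56% of respondents hold it, even though nothing about the population has changed.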

Research design is also key. For example, retrospective and prospective studies must each come to grips with how their research aims relate to the context and timing of the study. Whether a study is a laboratory experiment, an observational study, or a cohort study, each design has specific weaknesses that can inadvertently affect data collection and results.