Call it a wild week in the business world, specifically at OpenAI. In just under a week, the company went through firings, resignations, threats of resignation, Microsoft interventions, and rehirings.

It was so complex I won’t write another summary of events. If you are curious, my Memeing The News wrap-up gives you the big picture.

Late Friday, OpenAI CEO Sam Altman stepped down from his role (which is code for fired) after he lost the confidence of his board, which said he had not been consistently candid with them. A corporate memo first reported by Axios the next day said Altman was not fired over malfeasance. The move blindsided Microsoft and the AI industry. OpenAI co-founder Greg Brockman quit just hours after Altman’s departure, and the company’s investors pressured the OpenAI board to reinstate Altman.

In a sudden twist, the New York Times reported on Saturday night that both Brockman and Altman might return to OpenAI and were discussing it with the company board. Then in another surprising twist, Bloomberg reported that the board did not agree to Altman’s reinstatement terms and had hired former Twitch chief Emmett Shear as chief executive officer, while Altman and Brockman would instead lead a new in-house AI team at Microsoft.

As if that was not dramatic enough, on the same Monday it was announced that Altman would not rejoin OpenAI, 500 OpenAI employees signed a letter threatening to quit if Altman was not reinstated. Bloomberg then reported on Tuesday that Altman and the OpenAI board were negotiating conditions to bring back the former CEO.

Then in another gigantic twist, Altman and OpenAI announced on Wednesday that the fired boss would return to the company under a new board.

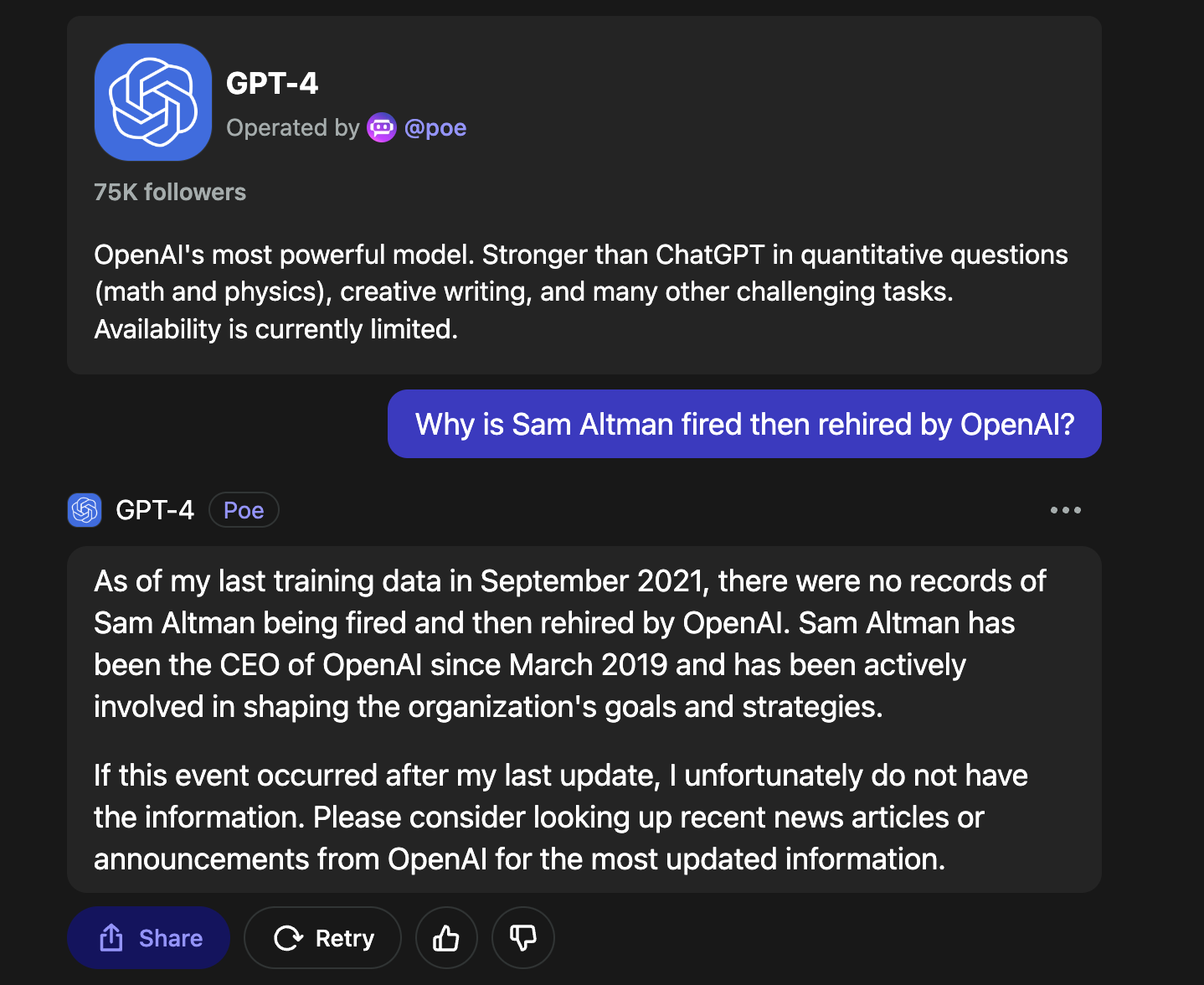

Even after Altman’s rehiring, a question still lingers: why was he fired? As a journalist in training myself, I tried contacting some credible sources.

Okay, maybe the most powerful AI in use, created by OpenAI itself, doesn’t have the answers. But a Reuters article does provide some tantalizing details about the background of the firing. If you read it, it is a big-if-true story. From Reuters:

Ahead of OpenAI CEO Sam Altman’s four days in exile, several staff researchers wrote a letter to the board of directors warning of a powerful artificial intelligence discovery that they said could threaten humanity, two people familiar with the matter told Reuters.

The previously unreported letter and AI algorithm were key developments before the board's ouster of Altman, the poster child of generative AI, the two sources said. Prior to his triumphant return late Tuesday, more than 700 employees had threatened to quit and join backer Microsoft (MSFT.O) in solidarity with their fired leader.

The sources cited the letter as one factor among a longer list of grievances by the board leading to Altman's firing, among which were concerns over commercializing advances before understanding the consequences. Reuters was unable to review a copy of the letter. The staff who wrote the letter did not respond to requests for comment.

Now, I don’t have time to go in-depth into the business structure of OpenAI. If you are curious, this TLDR News video explains it very well.

The big lesson we can learn about AI this week is that whatever comes next is less in the control of governments, and more in the control of corporations and businesses.

Governments around the world are training their regulatory firepower on AI with the same intensity they bring to climate change. But the turmoil at OpenAI shows that corporations have the bigger say over the controls on their own products. It also signals a shift in the company’s ideology, what The Economist called a “move from academic idealism to commercial pragmatism.”

In fact, the OpenAI saga marks the start of a new, more grown-up phase for the AI industry. For OpenAI, Mr Altman’s triumphant return may supercharge its ambitions. For Microsoft, which stood by Mr Altman in his hour of need, the episode may result in greater sway over AI’s hottest startup. For AI companies everywhere it may herald a broader shift away from academic idealism and towards greater commercial pragmatism. And for the technology’s users, it may, with luck, usher in more competition and more choice.

What could be concerning is how the shift might affect the business decisions of the corporation, and how in turn the AI products it creates could harm people. We have seen Facebook and other social media companies start out with good intentions and then, under the influence of profit and unintended consequences, cause real harm to society. If OpenAI and other AI-based businesses follow the same path, the damage to reality and perceptions could be unthinkable. Just take the case of a British man who was sent to prison after a chatbot encouraged his plan to kill the queen. From Wired:

On December 25, 2021, Jaswant Singh Chail entered the grounds of Windsor Castle dressed as a Sith Lord, carrying a crossbow. When security approached him, Chail told them he was there to "kill the queen."

Later, it emerged that the 21-year-old had been spurred on by conversations he'd been having with a chatbot app called Replika. Chail had exchanged more than 5,000 messages with an avatar on the app—he believed the avatar, Sarai, could be an angel. Some of the bot’s replies encouraged his plotting.

In February 2023, Chail pleaded guilty to a charge of treason; on October 5, a judge sentenced him to nine years in prison. In his sentencing remarks, Judge Nicholas Hilliard concurred with the psychiatrist treating Chail at Broadmoor Hospital in Crowthorne, England, that “in his lonely, depressed, and suicidal state of mind, he would have been particularly vulnerable” to Sarai’s encouragement.

As AI becomes more integrated with everyday life, set aside for a moment the privacy concerns and intellectual property infringement involved in its creation. As we trust AI to make more life-changing and consequential decisions, will it lean towards morality or towards its maker’s corporate interests, even when it knows that what it is doing will be harmful?

Based on the precedent set by Facebook and Meta, I am not optimistic that AI will completely dodge the same mistakes. Fingers crossed the corporate overlords have these things covered, well, at least before the AI overlords have humanity covered.